Artificial intelligence imaging can be used to create art, try on clothes in virtual fitting rooms or help design advertising campaigns.

But experts fear the darker side of the easily accessible tools could worsen something that primarily harms women: nonconsensual deepfake pornography.

Since then, deepfake creators have disseminated similar videos and images targeting online influencers, journalists and others with a public profile.

Thousands of videos exist across a plethora of websites.

And some have been offering users the opportunity to create their own images, essentially allowing anyone to turn whoever they wish into sexual fantasies without their consent, or use the technology to harm former partners.

The problem, experts say, grew as it became easier to make sophisticated and visually compelling deepfakes.

And they say it could get worse with the development of generative AI tools that are trained on billions of images from the internet and spit out novel content using existing data.

“The reality is that the technology will continue to proliferate, will continue to develop and will continue to become sort of as easy as pushing the button,” said Adam Dodge, the founder of EndTAB, a group that provides trainings on technology-enabled abuse.

“And as long as that happens, people will undoubtedly … continue to misuse that technology to harm others, primarily through online sexual violence, deepfake pornography and fake nude images.”

The 28-year-old found deepfake porn of herself 10 years ago when, out of curiosity one day, she used Google to search for an image of herself.

To this day, Martin says she does not know who created the fake images, or the videos of her engaging in sexual intercourse that she would later find.

She suspects someone likely took a picture posted on her social media page or elsewhere and doctored it into porn.

Horrified, Martin contacted different websites for a number of years in an effort to get the images taken down.

Some did not respond. Others took the material down, but she soon found it up again.

“You cannot win,” Martin said.

“This is something that is always going to be out there. It’s just like it’s forever ruined you.”

The more she spoke out, she said, the more the problem escalated.

Some people even told her the way she dressed and posted images on social media contributed to the harassment, essentially blaming her for the images instead of the creators.

But governing the internet is next to impossible when countries have their own laws for content that is sometimes made halfway around the world.

Platforms try to curb access

In the meantime, some AI models say they’re already curbing access to explicit images.

OpenAI says it removed explicit content from data used to train the image generating tool DALL-E, which limits the ability of users to create those types of images.

The company also filters requests and says it blocks users from creating AI images of celebrities and prominent politicians.

Midjourney, another model, blocks the use of certain keywords and encourages users to flag problematic images to moderators.

Meanwhile, the startup Stability AI rolled out an update in November that removes the ability to create explicit images using its image generator Stable Diffusion.

Those changes came following reports that some users were creating celebrity-inspired nude pictures using the technology.

Stability AI spokesperson Motez Bishara said the filter uses a combination of keywords and other techniques like image recognition to detect nudity and returns a blurred image.

But it is possible for users to manipulate the software and generate what they want since the company releases its code to the public.

Bishara said Stability AI’s license “extends to third-party applications built on Stable Diffusion” and strictly prohibits “any misuse for illegal or immoral purposes.”

Some social media companies have also been tightening up their rules to better protect their platforms against harmful materials.

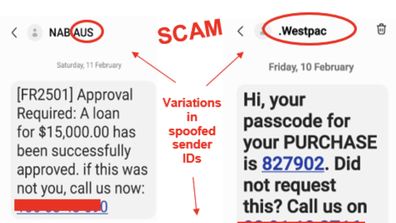

The textual content message to look out for that would trick nearly anybody

Previously, the company had barred sexually explicit content and deepfakes that mislead viewers about real-world events and cause harm.

The gaming platform Twitch also recently updated its policies around explicit deepfake images after a popular streamer named Atrioc was discovered to have a deepfake porn website open on his browser during a livestream in late January.

The site featured phony images of fellow Twitch streamers.

Twitch already prohibited explicit deepfakes, but now showing a glimpse of such content, even if it is intended to express outrage, “will be removed and will result in an enforcement,” the company wrote in a blog post.

And intentionally promoting, creating or sharing the material is grounds for an instant ban.

Other companies have also tried to ban deepfakes from their platforms, but keeping them off requires diligence.

Research into deepfake porn is not prevalent, but one report released in 2019 by the AI firm DeepTrace Labs found it was almost entirely weaponised against women and the most targeted individuals were western actresses, followed by South Korean K-pop singers.

Meta spokesperson Dani Lever said in a statement the company’s policy restricts both AI-generated and non-AI adult content and it has restricted the app’s page from advertising on its platforms.

In February, Meta, as well as adult sites like OnlyFans and Pornhub, began participating in an online tool, called Take It Down, that allows teens to report explicit images and videos of themselves from the internet.

The reporting site works for regular images and for AI-generated content, which has become a growing concern for child safety groups.

“When people ask our senior leadership what are the boulders coming down the hill that we’re worried about? The first is end-to-end encryption and what that means for child protection. And then second is AI and specifically deepfakes,” said Gavin Portnoy, a spokesperson for the National Center for Missing and Exploited Children, which operates the Take It Down tool.

“We have not … been able to formulate a direct response yet to it,” Portnoy said.

Source: www.9news.com.au